- cross-posted to:

- [email protected]

In total, NHTSA investigated 956 crashes, starting in January 2018 and extending all the way until August 2023. Of those crashes, some of which involved other vehicles striking the Tesla vehicle, 29 people died. There were also 211 crashes in which “the frontal plane of the Tesla struck a vehicle or obstacle in its path.” These crashes, which were often the most severe, resulted in 14 deaths and 49 injuries.

Driving should not be a detached or “backseat” experience. You are driving a 2-ton death machine. All the efforts to make driving a more entertaining or laid-back experience have completely ruined people’s respect for driving.

I would almost argue that you should avoid being relaxed while driving. You should always be aware that circumstances can change at any time and you shouldn’t be coerced into thinking otherwise.

That is one of the key takeaways from years of safety engineering studies. True aviation autopilot studies have developed rigorous systems to avoid just this kind of problem.

Tesla took the name but threw away all the rigorous procedures when they built their system.

You sound like a fan of the Tullock Spike

I mean, I drive a Miata, so in a lot of ways, yeah. If I get into an accident with pretty much any modern truck/SUV, I’ll be the loser.

The article does a good job breaking down the issues with Tesla’s Autopilot, including the fact that it’s a misleading name and that the system has some pretty significant flaws, giving people a false sense of confidence in its capabilities.

But raw crash statistics are absolutely meaningless to me without context. Are 956 crashes and 29 deaths more or less than you would expect from a similar number of cars with human drivers? What about in comparison to other brands’ semi-autonomous driving systems?

Driving is an inherently unsafe process. Journalists suck at conveying relative risks, probably because the average reader sucks at understanding statistical risk, but there needs to be a better process for comparing systems than just “29 people died”.

At least in 2023, Teslas had more crashes per car than any other automaker. There were only three automakers with over 20 crashes per 1000 cars - Tesla, Ram, and Subaru. And Tesla was at the top of the list, with 23.54 per 1000. The next highest was Ram with 22.76 per 1000.
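To put the gap between those two per-1000 figures in proportion, here is a minimal sketch that uses only the two rates quoted above. The function name is made up for illustration, and the figures are taken from the comment as-is, not independently checked.

```python
def relative_excess(rate_a: float, rate_b: float) -> float:
    """Return how much higher rate_a is than rate_b, as a fraction of rate_b."""
    return (rate_a - rate_b) / rate_b


# Crashes per 1000 cars, as quoted in the comment above (not verified here).
tesla_per_1000 = 23.54
ram_per_1000 = 22.76

excess = relative_excess(tesla_per_1000, ram_per_1000)
print(f"Tesla's quoted rate is {excess:.1%} higher than Ram's")
```

On those numbers, the top two brands differ by about 3.4%, so the ranking alone says less than the size of the gap does.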

NHTSA acknowledges that its probe may be incomplete based on “gaps” in Tesla’s telemetry data. That could mean there are many more crashes involving Autopilot and FSD than what NHTSA was able to find.

You seem to be pretending that these numbers are an overestimate. But the article makes clear that this investigation is a gross underestimate. There are many, many more dangerous situations that “Tesla Autopilot” has been in.

Driving is an inherently unsafe process. Journalists suck at conveying relative risks, probably because the average reader sucks at understanding statistical risk, but there needs to be a better process for comparing systems than just “29 people died”.

These are 29 people who died while provably under Autopilot. This isn’t a statistic. This was an investigation. Your treatment of this number as a “statistic” actually shows that you’re not fully understanding what NHTSA accomplished here.

What you want, a statistical test for how often Autopilot fails, is… well… depending on the test, as high as 100%.

https://www.youtube.com/watch?v=azdX_6L1SOA

100% of the time, Tesla Autopilot will fail this test. That’s why Luminar Technologies used a Tesla for their live demonstration at CES in Las Vegas: Tesla was failing this test so reliably that it was the best car to pair up with their LIDAR technology as a comparison point.

Tesla Autopilot is an automaton. When you put it inside of its failing conditions, it will fail 100% of the time. Like a machine.

You seem to be pretending that these numbers are an overestimate

My point isn’t that it’s an overestimate or an underestimate. I trust the NHTSA numbers.

The question is how many people would have died driving similar routes/distances. No technology is perfect, and Tesla has plenty of room for improvement, but my question is: is it safer to use systems like Autopilot or just drive manually?

The article headline makes it sound like autonomous driving is dangerous, but driving is dangerous. Articles like this cover the absolute numbers without contextualizing whether or not those numbers are above or below the ‘norm’.

Tesla Autopilot is an automaton. When you put it inside of its failing conditions, it will fail 100% of the time. Like a machine.

Sure, I wasn’t trying to insinuate Tesla’s system was any good at all, let alone some sort of infallible program. Honestly, I wouldn’t have even mentioned Tesla, except it’s the subject of the post. My statements are just about how bad we are at covering the comparative risk of alternatives to risky systems.

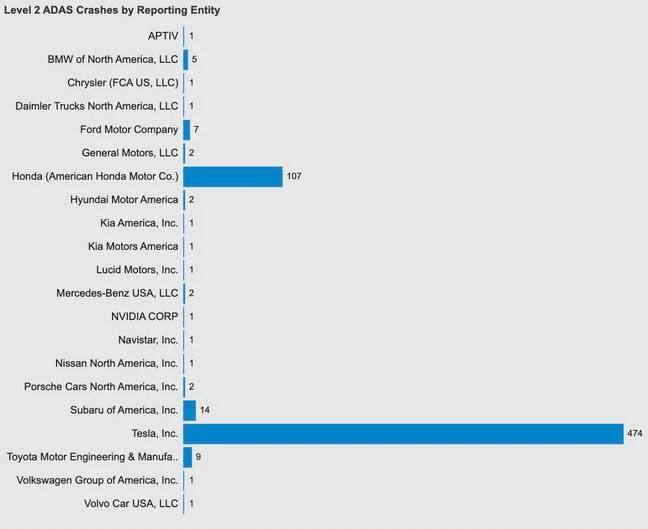

How about this. Let’s take ADAS usage from other companies and compare it to Tesla’s.

Oh right. No one else is dying anywhere close to Tesla. As it turns out, no other car jerks you out of a lane and into a center-median killing everyone inside.

You’re not up against other “humans”. It’s 2024. Many cars have ADAS systems, lane centering systems, etc. In fact, it’s Tesla who has fallen behind. Tesla doesn’t even have RADAR anymore, and typical Toyotas and Subarus (even cheap ones) all have lane centering and emergency braking.

And when we’re in parking lots (where most accidents occur, albeit non-fatal ones but still costly), other vehicles have ultrasonics, cross-lane detection, and 360 cameras to see all around us.

No one else’s ADAS systems have anywhere close to the reported number of errors, crashes, or deaths as Tesla has.

You realize you’re still proving my point: we as a society suck at differentiating between absolute numbers and statistics.

Again, I have no love for Teslas, but anecdotes of Autopilot failures are not proof that it’s less safe than humans. It’s proof that they need to improve their system, and your comments and the article you shared list a bunch of ways they can do that.

Having more failures than other ADAS is inching towards the right comparative analysis, but how many Teslas are on the road vs other ADAS cars? If there’s 10x as many Teslas as any other manufacturer, then it’s probably not shocking that they have more failures. (Also, citation needed).

Again, for the last time, I’m not saying you’re wrong. I’m saying the data and anecdotes as presented lack the contextualization to show how much worse Tesla’s implementation is than humans or other manufacturers’ ADAS. I’m not defending Tesla, and I’m sure the data is out there, but it’s not here in your post to back up your case against Tesla.

Are they 10%, 50%, 100% more or less likely to crash per mile driven? Do they have more or fewer fatalities per crash incident when using Autopilot? Yes, “Autopilot drives off road, crashes into tree” is bad. But so is “driver falls asleep, drives off road, crashes into tree”.

Unfortunately, driving is an inherently unsafe system. In 2022, there were over 42,000 traffic deaths in the US. Is Tesla making this better or worse? Just because they are causing accidents doesn’t mean they’re causing more accidents.

Just because they are causing accidents doesn’t mean they’re causing more accidents.

These statistics literally show Tesla vehicles causing more accidents than other ADAS systems.

but how many Teslas are on the road vs other ADAS cars?

Mobileye has 170 million vehicles under its ADAS system across Nissan, BMW, Volkswagen, Ford, Toyota, Porsche, and … early versions of Tesla (back when Tesla Autopilot 1 was performing better than this new crap that’s come out more recently).

Tesla isn’t even the #1 manufacturer of ADAS systems. Mobileye is. The only reason Tesla ever went into this Autopilot / FSD crap was because Mobileye fired them as a customer because Mobileye didn’t like how Tesla’s advertisements oversold the capabilities. And now Tesla has a shittier ADAS system that they pretend is better than their competitors, even though Tesla can’t even figure out how to integrate RADAR and Ultrasonic systems like Mobileye can.

They don’t want a statistical test for how often Autopilot fails. They want the investigation contextualized. 29 people died over 5 years due to Tesla Autopilot. About 35,000 to 43,000 people die in car accidents in the US every year. Without proper contextualization, I can’t tell if Tesla Autopilot is doing great or awful.
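To make that comparison concrete, here is a rough sketch that annualizes the 29 deaths over the investigation window quoted from the article (January 2018 through August 2023, counted here as 68 months) and sets it against the annual range given above. The variable names are illustrative, and the result is only a share of deaths, not a safety rate, because none of these figures say how many miles were driven with Autopilot engaged.

```python
# Figures quoted in this thread; none are independently verified here.
AUTOPILOT_DEATHS = 29
MONTHS_IN_WINDOW = 68  # January 2018 through August 2023, inclusive
US_ROAD_DEATHS_PER_YEAR_RANGE = (35_000, 43_000)

years_in_window = MONTHS_IN_WINDOW / 12
deaths_per_year = AUTOPILOT_DEATHS / years_in_window
print(f"Roughly {deaths_per_year:.1f} investigated Autopilot deaths per year")

for national_total in US_ROAD_DEATHS_PER_YEAR_RANGE:
    # Share of all annual road deaths; this ignores exposure (miles driven).
    share = deaths_per_year / national_total
    print(f"{share:.4%} of {national_total:,} annual US road deaths")
```

A tiny share is exactly what you would expect if Autopilot accounts for a tiny share of all miles driven, so this number cannot settle the argument either way. NHTSA also says its count may be incomplete.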

The Register compiled this count back in 2022, but Tesla’s issues continue to this day. Reported ADAS crashes for Tesla are an abnormal outlier. Anyone looking at the reports can see something is grossly wrong here.

That’s also just raw numbers without contextualization. How many miles of what kind were driven by each of those cars’ Autopilot-like systems? If this is over the lifetime of the vehicles, I imagine Tesla has more, as they had a head start on including these types of features. But maybe the other companies make it up in volume? Or maybe Tesla’s ADAS is engaged for more miles and in more dangerous situations than its competitors’ systems, and does much better in those situations than they or humans do. I don’t know at all. And the graphs and investigations don’t tell me.

Y’all are overcomplicating this. Death car kills people because asshole CEO lies about how safe it is.

There is footage of Tesla’s Autopilot crashing into medians and driving on the wrong side of the road right now.

As I said earlier: it’s an automation. An automaton. When this ‘Autopilot’ or ‘Full Self-Driving’ gets placed in front of still objects (like fire trucks with their sirens on), the damn thing crashes into them. It’s clearly fucking blind vs still objects and no one at Tesla has figured out how to solve that yet.

Still median? Crash.

Still firetruck on the side of the road? Crash

Still balloon in the shape of a child at live CES / Luminar tech demo? Crashes every time.

It’s a god-awful system that is only saved by human intervention in these cases. When Tesla’s ADAS fails, it fails near-certainly and repeatably.

Despite that fact, we have a CEO lying to convince people otherwise about this automation’s capabilities.

Now we have an NHTSA investigation into deaths and crashes, and the fanbase is still pretending the emperor has clothes on.

There’s video footage of people doing the same stupid shit and worse. Constantly. Is it better than people, yes or no? Is it better than its competitors? Those are fundamental questions. Obviously, it could be better. But is it already better than anything else? Raw numbers don’t tell you. Videos of it doing idiotic things don’t tell you. Proper contextualization and comparison do. No one in these comments is arguing it is better than anything else, just that the data we have doesn’t tell us anything.

Is it better than people, yes or no?

Can people regularly stop vs a balloon child in broad daylight?

https://www.youtube.com/watch?v=azdX_6L1SOA

Answer: Tesla fails this test 100% of the time. That’s why it’s a tech demo for Luminar, because they’re selling LIDAR units to the public. They chose Tesla because it reliably fails vs these balloon children.

Sheesh. You don’t understand the argument and/or discussion in question. Don’t be defensive, and instead try to understand the argument.

I think they’re looking for number of accidents/fatalities per 100k miles, or something similar to compare to human accidents/fatalities. That’s a better control to determine comparatively how it performs.
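A minimal sketch of that kind of normalization is below. The function name and every number in it are hypothetical placeholders chosen only to show the arithmetic; nothing in this thread supplies real mileage figures for Autopilot or for human drivers.

```python
def rate_per_100k_miles(events: int, miles: float) -> float:
    """Return the number of events per 100,000 miles driven."""
    if miles <= 0:
        raise ValueError("miles must be positive")
    return events / miles * 100_000


# HYPOTHETICAL inputs for illustration only; these are not real crash data.
system_rate = rate_per_100k_miles(events=12, miles=4_000_000)
human_rate = rate_per_100k_miles(events=30, miles=8_000_000)

print(f"Driver-assist (hypothetical): {system_rate:.3f} per 100k miles")
print(f"Human drivers (hypothetical): {human_rate:.3f} per 100k miles")
```

Even with real inputs, a fair comparison would also need to match the kind of miles (highway vs city, weather, time of day), since driver-assist systems tend to be engaged under different conditions than average driving.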

Tesla Autopilot is an automaton. When you put it inside of its failing conditions, it will fail 100% of the time. Like a machine.

So are people. People are laughably bad at driving. Completely terrible. We fail under regular and expected conditions all the time. The question is whether the automated driving system (Tesla or not) does it better than people.

Can’t make an omelette without killing a few people

- Elon Musk