Whisper is open source. GPT-2 was, too.

Absolutely this. Phones are the primary device for Gen Z. Phone use doesn’t develop tech skills because there’s barely anything you can do with the phones. This is particularly true with iOS, but still applies to Android.

Even as an IT administrator, there’s hardly anything I can do when troubleshooting phone problems. Oh, push notifications aren’t going through? Well, there are no useful logs or anything for me to look at, so…cool. It makes me crazy how little visibility I have into anything on iPhones or iPads. And nobody manages “Android” in general; at best they manage like two specific models of one specific brand (usually Samsung or Google). It’s impossible to manage arbitrary Android phones because there’s so little standardization and so little control over the software in the general case.

I posted some of my experience with Kagi’s LLM features a few months ago here: https://literature.cafe/comment/6674957 . TL;DR: the summarizer and document discussion are fantastic, because they do not hallucinate. The search integration is as good as anyone else’s, but still nothing to write home about.

The Kagi assistant isn’t new, by the way; I’ve been using it for almost a year now. It’s now out of beta and has an improved UI, but the core functionality seems mostly the same.

As far as actual search goes, I don’t find it especially useful. It’s better than Bing Chat or whatever they call it now because it hallucinates less, but the core concept still needs work. It basically takes a few search results and feeds them into the LLM for a summary. That’s not useless, but it’s certainly not a game-changer. I typically want to check its references anyway, so it doesn’t really save me time in practice.
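To make the “feed a few search results into the LLM” idea concrete, here is a rough Python sketch of how such a prompt could be assembled. This is purely an illustration of the general pattern, not how Kagi or anyone else actually implements it; the function name, the prompt wording, and the `(url, text)` result format are all made up for the example.

```python
def build_summary_prompt(query, results, max_results=3):
    """Build one prompt asking a model to answer only from numbered sources.

    `results` is a list of (url, text) pairs, best match first.
    Everything here is hypothetical; no real search or LLM API is called.
    """
    lines = [
        "Answer the question using only the sources below.",
        "Cite sources by number, and say so if they do not contain the answer.",
        "",
        f"Question: {query}",
        "",
    ]
    # Only the top few results are included, each tagged with a citation number.
    for i, (url, text) in enumerate(results[:max_results], start=1):
        lines.append(f"[{i}] {url}")
        lines.append(text.strip())
        lines.append("")
    return "\n".join(lines)
```

The numbered sources are what make it possible to check the references afterwards, which is the step I end up doing by hand anyway.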

Kagi’s search is primarily not LLM-based and I still find the results and features to be worth the price, after being increasingly frustrated with Google’s decay in recent years. I subscribed to the “Ultimate” Kagi plan specifically because I wanted access to all the premium language models, since subscribing to either ChatGPT or Claude would cost about the same as Kagi, while Kagi gives me access to both (plus Mistral and Gemini). So if you’re interested in playing around with the latest premium models, I still think Kagi’s Ultimate plan is a good deal.

That said, I’ve been disappointed with the development of LLMs this year across the board, and I’m not convinced any of them are worth the money at this point. This isn’t so much a problem with Kagi as it is with all the LLM vendors. The models have gotten significantly worse for my use cases compared to last year, and I don’t quite understand why; I guess they are optimizing for benchmarks that simply don’t align with my needs. I had great success getting zsh or Python one-liners last year, for example, whereas now it always seems to give me wrong or incomplete answers.

My biggest piece of advice when dealing with any LLM-based tools, including Kagi’s, is: don’t use it for anything you’re not able to validate and correct on your own. It’s just a time-saver, not a substitute for your own skills and knowledge.

Is this legit? This is the first time I’ve heard of human neurons used for such a purpose. Kind of surprised that’s legal. Instinctively, I feel like a “human brain organoid” is close enough to a human that you cannot wave away the potential for consciousness so easily. At what point does something like this deserve human rights?

I notice that the paper is published by Frontiers, the same publisher that let the notorious AI-generated giant-rat-testicles image get published in one of its journals. They are not highly regarded in general.

DuckDuckGo is an easy first step. It’s free, publicly available, and familiar to anyone who is used to Google. Results are sourced largely from Bing, so there is second-hand rot, but IMHO there was a tipping point in 2023 where DDG’s results became generally more useful than Google’s or Bing’s. (That’s my personal experience; YMMV.) And they’re not putting half-assed AI implementations front and center (though they have some experimental features you can play with if you want).

If you want something AI-driven, Perplexity.ai is pretty good. Bing Chat is worth looking at, but last I checked it was still too hallucinatory to use for general search, and the UI is awful.

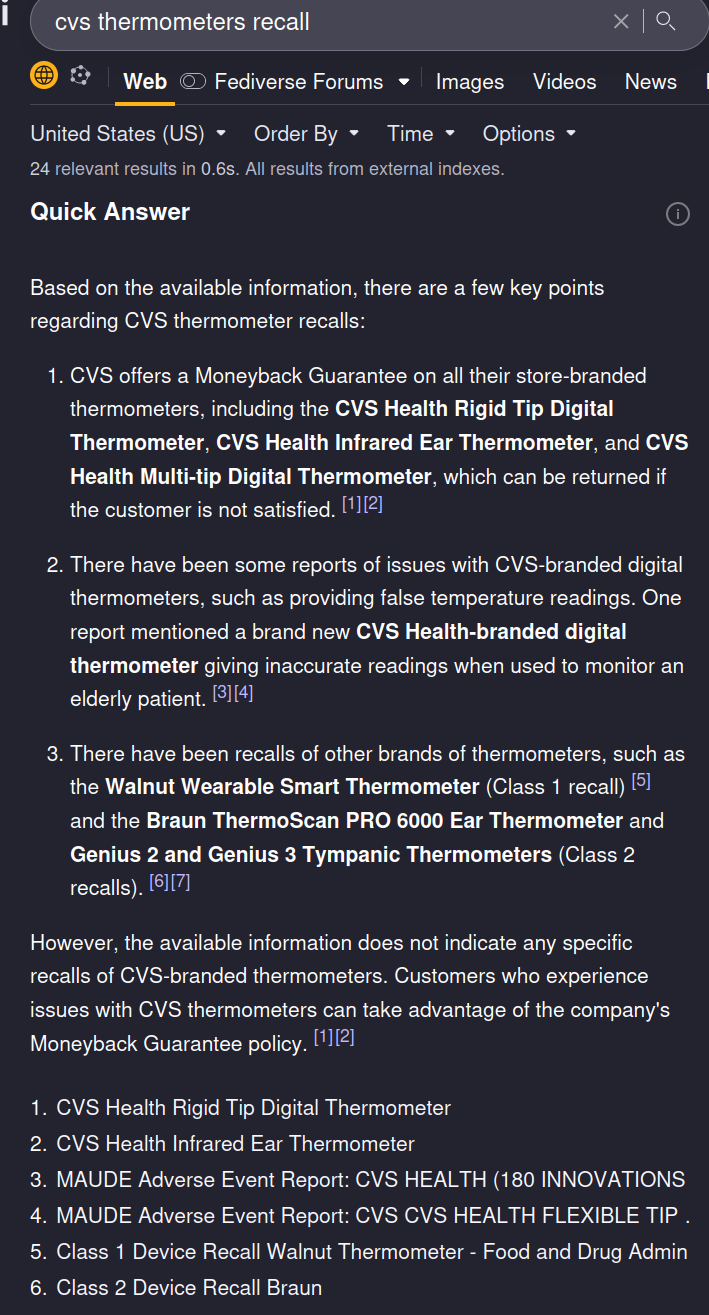

I’ve been using Kagi for a while now and I find its quick summaries (which are not displayed by default for web searches) much, much better than this. For example, here’s what Kagi’s “quick answer” feature gives me with this search term:

Room for improvement, sure, but it’s not hallucinating anything, and it cites its sources. That’s the bare minimum anyone should tolerate, and yet most of the stuff out there falls wayyyyy short.

I recently upgraded to a 7900 XTX on Debian stable, as well. I’m running the newest kernel from Debian’s backports repo (6.6, I think), and I didn’t have that same problem.

I did have other problems with OpenCL, though. I made a thread about this and solved it with some trouble. Check my post history if you’re interested. I hope it helps. I can take a closer look at my now-working system for comparison if you have further issues.

IT WORKS NOW! I will need time to run additional tests, but the gist of my solution was:

1. Backport llvm-18 from sid following the guide you linked at https://wiki.debian.org/SimpleBackportCreation

2. After compiling and installing all those deb files, I then installed the “jammy” version of amdgpu-install_6.0.60002-1.deb from https://www.amd.com/en/support/linux-drivers

3. Downloaded the latest firmware tree from https://git.kernel.org/pub/scm/linux/kernel/git/firmware/linux-firmware.git, and simply copied all the files from its amdgpu folder into my system’s /lib/firmware/amdgpu. Got that idea from https://discussion.fedoraproject.org/t/amdgpu-doesnt-seem-to-function-with-navi-31-rx-7900-xtx/72647

4. sudo update-initramfs -u && sudo reboot

I’m not totally sure if step 3 was sane or necessary. Perhaps the missing piece before that was that I needed to manually update my initramfs? I’ve tried like a million things at this point and my system is dirty, so I will probably roll back to my snapshot from before all of this and attempt to re-do it with the minimal steps, when I have time.
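For the re-do, one way to find out whether step 3 changes anything at all is to compare the upstream firmware folder against the installed one before copying. A minimal Python sketch, assuming both folders hold plain, uncompressed files with matching names (that may not be true on every distro); `firmware_diff` is just a name I made up.

```python
import filecmp
from pathlib import Path

def firmware_diff(upstream_dir, system_dir):
    """Compare two firmware folders file by file.

    Returns (missing, differing): names present upstream but absent from
    system_dir, and names present in both whose contents differ.
    """
    upstream = Path(upstream_dir)
    system = Path(system_dir)
    missing, differing = [], []
    for src in sorted(upstream.iterdir()):
        if not src.is_file():
            continue
        dst = system / src.name
        if not dst.exists():
            missing.append(src.name)
        elif not filecmp.cmp(src, dst, shallow=False):
            # shallow=False forces a byte-by-byte comparison
            differing.append(src.name)
    return missing, differing
```

Calling it with the amdgpu folder of the linux-firmware checkout and /lib/firmware/amdgpu would show which files the copy actually adds or replaces. If both lists come back empty, the copy was a no-op and the initramfs update is the more likely fix.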

Anyway, I was able to run a real-world OpenCL benchmark, and it’s crazy-fast compared to my old GTX 1080. Actually a bigger difference than I expected. Like 6x.

THANKS FOR THE HELP!

Thanks for the links! I’ve never attempted making my own backport before. I’ll give it a shot. I might also try re-upgrading to sid to see if I can wrangle it a little differently. Maybe I don’t actually need mesa-opencl-icd if I’m installing AMD’s installer afterwards anyway. At least, I found something to that effect in a different but similar discussion.

Update: I upgraded to Sid. Unfortunately, mesa-opencl-icd depends on libclc-17, which uninstalls -18. So I can’t get OpenCL working while the correct libclc is installed.

No idea where to go from here. I’ll probably restore my Bookworm snapshot, since I don’t want to be on Sid if it doesn’t solve this problem.

Update: Running amdgpu-install did not provide those files. There were a few errors regarding vulkan packages when I attempted it, I guess because it’s assuming Ubuntu repos. Trying with just opencl and not vulkan succeeded, but clinfo still reported the missing files.

I don’t think I can get this working without a whole newer llvm.

Ah, somehow I didn’t see 18 there and only looked at 17. Thanks!

I tried pulling just the one package from the sid repo, but that created a cascade of dependencies, including all of llvm. I was able to get those files installed but not able to get clinfo to succeed. I also tried installing llvm-19 from the repo at https://apt.llvm.org/, with similar results. clinfo didn’t throw the fatal errors anymore, but it didn’t work, either. It still reported Number of devices 0 and OpenCL-based tools crashed anyway. Not with the same error, but with something generic about not finding a device or possibly having corrupt drivers.
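Since “no fatal errors” and “actually working” turned out to be different things here, a small check on the clinfo output can save some squinting. A minimal Python sketch that only looks for the “Number of devices” lines quoted above; the exact layout of the rest of clinfo’s output is an assumption, and both function names are made up.

```python
import re

def device_counts(clinfo_text):
    """Return every 'Number of devices' value found in clinfo-style text."""
    pattern = r"^\s*Number of devices\s+(\d+)\s*$"
    return [int(n) for n in re.findall(pattern, clinfo_text, re.MULTILINE)]

def opencl_usable(clinfo_text):
    """True only if at least one platform reports one or more devices."""
    return any(n > 0 for n in device_counts(clinfo_text))
```

Feed it the captured text of a clinfo run (for example via `subprocess.run(["clinfo"], capture_output=True, text=True).stdout`). An empty list means the line never appeared, which is a different failure from a platform reporting 0 devices.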

Should I bite the bullet and do a full upgrade to sid, or is there some way to do this more precisely that won’t muck up Bookworm?

Can you explain more about your workflow? Do the Nix packages have their own isolated dependency resolution? How does it work when Debian packages depend on a library you get from Nix, or vice-versa?

Thanks, that’s good advice. There are lower-numbered gfx* files in there. 900, 902, 904, 906. No 1030 or 1100. Same after reinstalling.

Looks like these files are actually provided by the libclc-15 package. libclc-16 has the same set of files. Even libclc-17 from sid has the same files. So I guess upgrading to testing/unstable wouldn’t help.

apt-file search gfx1100-amdgcn-mesa-mesa3d.bc yields no results, so I guess I need to go outside of the Debian repos. I’ll try the AMD package tonight.
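The same check can be scripted so it’s easy to repeat after each install attempt. A minimal Python sketch; the file-name suffix comes from the gfx1100-amdgcn-mesa-mesa3d.bc name above, but the directory you point it at is an assumption (check where your libclc package actually puts its files), and `missing_bitcode` is a made-up name.

```python
from pathlib import Path

def missing_bitcode(clc_dir, targets, suffix="-amdgcn-mesa-mesa3d.bc"):
    """Return the targets whose libclc bitcode file is absent from clc_dir."""
    directory = Path(clc_dir)
    if not directory.is_dir():
        # Nothing installed at this path, so every target counts as missing.
        return list(targets)
    present = {p.name for p in directory.glob("*.bc")}
    return [t for t in targets if f"{t}{suffix}" not in present]
```

Calling it with the libclc directory and `["gfx1030", "gfx1100"]` returns whichever of the two are still missing, so an empty list is the result to hope for.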

“Smart” may as well be synonymous with “unpredictable”. I don’t need my computer to be smart. I need it to be predictable, consistent, and undemanding.

I’ve been using Kagi for a while, so I’ll post a few quick thoughts I had after reading the article, linked blog, and mastodon thread.

The one thing in the blog post I strongly disagree with is her statement that the summarizer is “the same old AI bullshit”. I think they just assumed that without actually testing it. The summarizer is fantastic, and is a great example of the right way to use LLMs. Its output comes entirely from the URL or file you specify. It does not hallucinate. You can ask it follow-up questions about the document, and again, its replies are limited in scope to what’s actually in that document. If you ask it something out of scope it’ll tell you that it can’t find that information in the document. This is great because it’s using the LLM for what LLMs are actually good for — complex language parsing — and not for what they’re bad for, like reasoning or information storage/retrieval. It’s actually quite difficult to misuse the summarizer. It’s straightforward and effective. This is Kagi’s killer feature, IMO.

I can’t speak as highly of its search-integrated AI features like FastGPT. They mostly take information from the contents of the first few search results, but they also seem to “fill in the blanks” more than I’d like. Sometimes they give me information that is simply not in the sources they cite. It’s not as bad as using ChatGPT (which hallucinates all day every day, and if it ever tries to cite sources, it hallucinates those, too), but it needs improvement.

That said, Kagi doesn’t shove the AI down your throat like you might think reading the blog post. These are separate features that need to be explicitly activated. Plain searches don’t return results from the LLMs. If you want that, you need to explicitly go to the assistant or trigger the “quick answer” feature on the search results page. EDIT: I just realized that this is not totally true as of a few weeks ago, when they added a feature to automatically trigger the “quick answer” blurb for search queries ending in a question mark. Perhaps that’s why Lori felt like it was over-emphasized.

Anyway, back to the summarizer, here is an example of it in action. I gave it the URL of the Mastodon post. I think this is an excellent result. I also asked it an unrelated followup question so you can see that it limits itself to the information in the URL. It will not make shit up!

The summarizer lets me download conversations in markdown form, so I’ll just paste it right in here so you can see.

Exploring https://hackers.town/@lori/112255132348604770

Assistant:

Key points:

Query:

What’s the capital of North Dakota?

Assistant:

The knowledge provided does not contain any information about the capital of North Dakota. The context is focused on an email exchange between the author and the CEO of Kagi search engine regarding criticism of the company.

Correct. This is also why Apple switched to zsh as the default shell over bash. They still ship Bash 3.2 in macOS, because from 4.0 on, Bash started using GPLv3 instead of GPLv2.

I’m not against the idea of creating proprietary software out of open-source software, if the license allows that. However, I am always against this practice of “closing the door behind you”.

Apple: builds their entire software ecosystem on free, open-source foundations.

Also Apple: better have a million euros if you want to even start distributing software.

The best use case for an external app store is free open-source software, like we have on the Android side with F-Droid. Apple stopped that before it even started. Jeez.

Bell Riots are coming this year. The Second American Civil War starts in 2026, which leads directly into WWIII.

From there, everything is pretty much terrible until warp drive is invented.

This is correct, albeit not universal.

KDE has a predefined schedule for “release candidates”, which includes RC2 later this month. So “RC1” is clearly not going to be the final version. See: https://community.kde.org/Schedules/February_2024_MegaRelease

This is at least somewhat common. In fact, it’s the same way the Linux kernel development cycle works. They typically have 7 release candidates, released on a weekly basis between the merge window and final release. See: https://www.kernel.org/category/releases.html

In the world of proprietary corporate software, I more often see release candidates presented as potentially final; i.e. literal candidates for release. The idea of scheduling multiple RCs in advance doesn’t make sense in that context, since each one is intended to be the last (with fingers crossed).

It’s kind of splitting hairs, honestly, and I suspect this distinction has more to do with the transparency of open-source projects than anything else. Apple, for example, may indeed have a schedule for multiple macOS RCs right from the start and simply choose not to share that information. They present every “release candidate” as being potentially the final version (and indeed, the final version will be the same build as the final RC), but in practice there’s always more than one. Also, Apple is hardly an ideal example to follow, since they’ve apparently never even heard of semantic version numbering. Major compatibility-breaking changes are often introduced in minor point releases. It’s infuriating. But I digress.

Also worth mentioning: you might still need to add the “most recent visit” column under the View menu. And if you dare to actually load any of those pages, they’ll move all the way to the top, and will not remain in their original location. It’s really annoying.